Variational autoencoders (VAEs) are generative models, in contrast to the standard neural networks typically used for regression or classification tasks. VAEs have diverse applications, from generating fake human faces and handwritten digits to producing purely "artificial" music. This post will explore what a VAE is, the intuition behind it and also the tough-looking (but quite … Continue reading Variational Autoencoder Explained

Category: Deep Learning

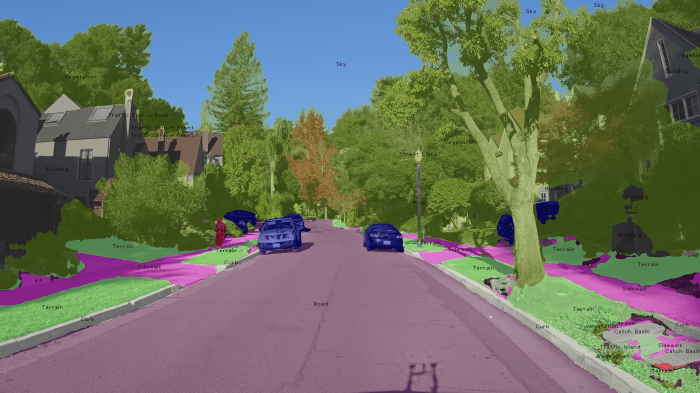

A Look at Image Segmentation using CNNs

Image segmentation is the task of assigning a label to individual pixels (all or some of those in the image) instead of just one label for the whole image. As a result, image segmentation is also categorized as a dense prediction task. Unlike detection using rectangular bounding boxes, segmentation provides pixel-accurate locations of objects in … Continue reading A Look at Image Segmentation using CNNs
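To make the "dense prediction" idea concrete, here is a minimal sketch in plain Python. The score grid, the class ids, and the helper names `segment` and `classify` are all made up for illustration (they are not from the post), and averaging scores to get a whole-image label is just one simple assumption. It only shows the difference in output shape: one label per pixel versus one label per image.

```python
# Hypothetical per-pixel class scores for a tiny 2x3 image and 3 classes
# (0 = background, 1 = cat, 2 = dog). Layout: scores[row][col][class].
scores = [
    [[0.9, 0.05, 0.05], [0.2, 0.7, 0.1], [0.1, 0.8, 0.1]],
    [[0.6, 0.3, 0.1], [0.1, 0.2, 0.7], [0.3, 0.3, 0.4]],
]


def argmax(values):
    """Index of the largest value in a list."""
    return max(range(len(values)), key=lambda i: values[i])


def segment(scores):
    """Dense prediction: pick one label for every pixel."""
    return [[argmax(pixel) for pixel in row] for row in scores]


def classify(scores):
    """Whole-image prediction: average the scores over all pixels
    (an illustrative assumption) and pick a single label."""
    n_classes = len(scores[0][0])
    totals = [0.0] * n_classes
    count = 0
    for row in scores:
        for pixel in row:
            for c, s in enumerate(pixel):
                totals[c] += s
            count += 1
    return argmax([t / count for t in totals])


mask = segment(scores)    # a 2x3 grid of labels, same layout as the image
label = classify(scores)  # a single integer for the whole image
print(mask)
print(label)
```

The mask has the same height and width as the input, which is what lets segmentation localize objects per pixel rather than with a bounding box.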

Paper Explanation: Net2Net – Accelerating Learning via Knowledge Transfer

Motivation: One of the biggest challenges in designing new neural network architectures is time. In real-world workflows, one often trains many different neural networks during the experimentation and design process. This is wasteful, because each new model is trained from scratch. In a typical workflow, one trains multiple models, with each model … Continue reading Paper Explanation: Net2Net – Accelerating Learning via Knowledge Transfer

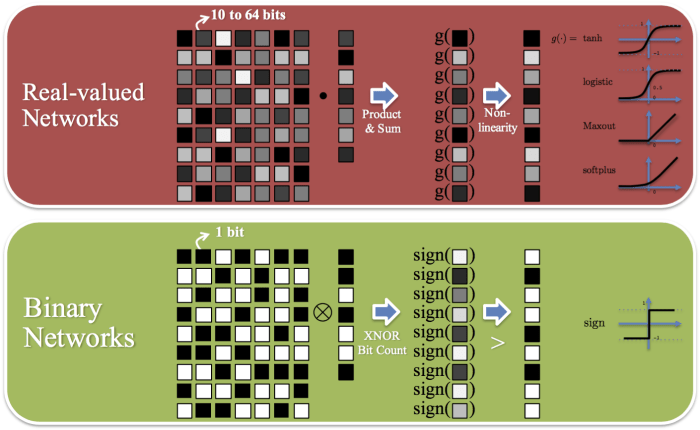

Paper Explanation: Binarized Neural Networks: Training Neural Networks with Weights and Activations Constrained to +1 or −1

Motivation: What if you want to do some real-time face detection/recognition using a deep learning system running on a pair of glasses? What if you want your alarm clock to be able to record and analyze your sleep and the conditions around you and come up with the best way of waking you up each … Continue reading Paper Explanation: Binarized Neural Networks: Training Neural Networks with Weights and Activations Constrained to +1 or −1

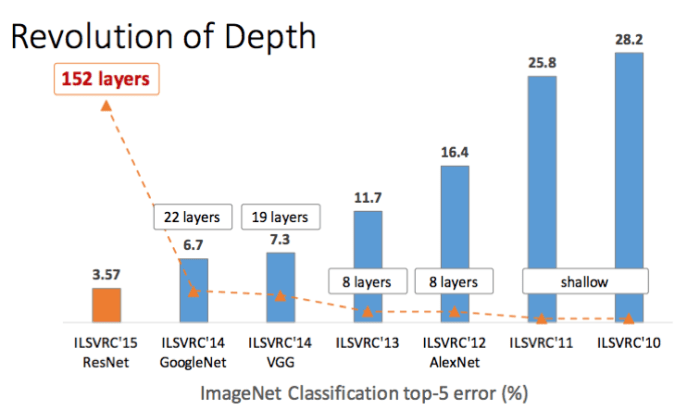

Paper Explanation – Deep Residual Learning for Image Recognition (ResNet)

This paper is the outcome when Microsoft finally released the beast! The ResNet "slayed" everything, and won not one, not two, but five competitions: ILSVRC 2015 image classification, detection and localization, and COCO 2015 detection and segmentation. Problems the Paper Addressed: The paper analysed what was causing the accuracy of deeper networks to drop as … Continue reading Paper Explanation – Deep Residual Learning for Image Recognition (ResNet)

Paper Explanation: Going Deeper with Convolutions (GoogLeNet)

This is the only paper I know of that references a meme! Not only that, but the model also became the state of the art for classification and detection in the ImageNet Large-Scale Visual Recognition Challenge 2014 (ILSVRC14). Problems the Paper Addressed: To create a deep network with high accuracy while keeping computation low. Google … Continue reading Paper Explanation: Going Deeper with Convolutions (GoogLeNet)