To what extent do CNN classification results generalise to object detection?

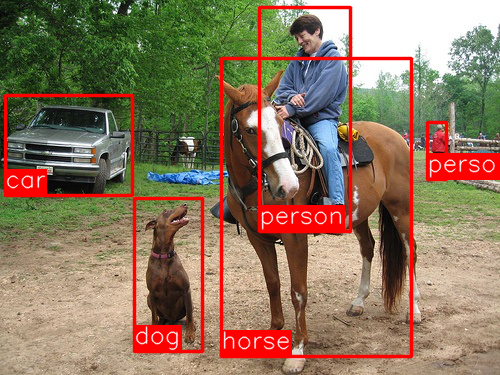

Object detection is the task of finding the different objects in an image and classifying them (as seen in the image above).

This paper is the first to show that a CNN can lead to dramatically higher object detection performance on PASCAL VOC than systems based on simpler HOG-like features.

Let’s now take a moment to understand what led to Regions with CNN features (R-CNN).

Unlike image classification, detection requires localising (likely many) objects within an image. One approach is to build a sliding-window detector. CNNs have been used in this way for at least two decades. In order to maintain high spatial resolution, these CNNs typically have only two convolutional and pooling layers. However, units high up in the R-CNN network, which has five convolutional layers, have very large receptive fields (195×195 pixels) and strides (32×32 pixels) in the input image, which makes precise localisation with the sliding-window technique very challenging.

To solve this problem, around 2000 region proposals are generated from the input image and only these are propagated through the CNN. Combining region proposals with CNNs gives the method its name, R-CNN: Regions with CNN features.
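The 195×195 receptive field and 32×32 stride can be checked with a little arithmetic. The sketch below assumes AlexNet-style kernel sizes and strides for the five convolutional layers and three pooling layers; the layer table is an assumption made for illustration and is not listed in this post.

```python
def receptive_field(layers):
    """Return (receptive field, stride) in input pixels for a stack of layers.

    Each layer is a (kernel_size, stride) pair. The receptive field grows by
    (kernel_size - 1) * current_stride at every layer.
    """
    rf, jump = 1, 1
    for kernel, stride in layers:
        rf += (kernel - 1) * jump
        jump *= stride
    return rf, jump


# Assumed AlexNet-style configuration: (kernel, stride) per layer.
ALEXNET_LIKE = [
    (11, 4),  # conv1
    (3, 2),   # pool1
    (5, 1),   # conv2
    (3, 2),   # pool2
    (3, 1),   # conv3
    (3, 1),   # conv4
    (3, 1),   # conv5
    (3, 2),   # pool5
]

print(receptive_field(ALEXNET_LIKE))  # (195, 32)
```

With a stride of 32 pixels between neighbouring pool5 units, a sliding window can only move in coarse steps, which is why precise localisation is hard.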

Understanding the Network Architecture

The object detection system consists of:

- A category-independent region-proposal generator. The proposals define the set of candidate detections available to the detector.

- A large CNN that extracts a fixed-length feature vector from each region.

- A set of class-specific linear SVMs.

- Per-class bounding-box regressors which are used to reduce localisation errors.

Region Proposal

Selective search is used in the paper. The algorithm looks for “blobby” image regions that are likely to contain objects, and it outputs around 2000 class-independent region proposals. The paper also mentions that “R-CNN is agnostic to the particular region proposal method” used, so the results shouldn’t change much if a different region-proposal method is substituted.

Feature Extraction

Each region proposal, after mean subtraction, is forward propagated through five convolutional layers and two fully connected layers. This generates a 4096-dimensional feature vector.

As the size of each region proposal is variable and the CNN is designed to take in a 227×227 pixel image, all pixels in a tight bounding box around each region proposal are warped to the required size, regardless of the proposal’s size or aspect ratio. In the paper it is mentioned that prior to warping, the bounding box is dilated so that at the warped size there are exactly p pixels of warped image context around the original box (p = 16 is used in the paper). This helps improve performance.
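The dilation step is easy to get wrong, so here is a minimal sketch of the arithmetic. The function name `dilate_box` and the `(x1, y1, x2, y2)` box format are assumptions made for illustration. The sketch also ignores boxes that extend past the image border after dilation, which a real pipeline would need to handle.

```python
def dilate_box(x1, y1, x2, y2, out_size=227, p=16):
    """Grow a box so that, after warping to out_size x out_size, the original
    box is surrounded by exactly p pixels of context on every side.

    The original box must land on the inner (out_size - 2p) pixels, so each
    axis is scaled by inner / extent. A padding of p warped pixels therefore
    corresponds to p * extent / inner pixels in the original image.
    """
    width = x2 - x1
    height = y2 - y1
    inner = out_size - 2 * p
    pad_x = p * width / inner
    pad_y = p * height / inner
    return (x1 - pad_x, y1 - pad_y, x2 + pad_x, y2 + pad_y)


# A 195x195 box already matches the inner region, so it is padded by 16 pixels.
print(dilate_box(0, 0, 195, 195))  # (-16.0, -16.0, 211.0, 211.0)
```

Because width and height are padded independently, the amount of context in original pixels differs per axis, which matches the anisotropic warp described above.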

SVM Classifiers

One binary SVM per class is used to classify each region proposal. The class with the highest score for a region proposal is assigned to that region. The 4096-dimensional feature vector from the CNN is the input to the SVM. In practice, all proposals of an image are scored at once: the feature matrix is typically 2000×4096 and the SVM weight matrix is 4096×N, where N is the number of classes, so a single matrix product yields all the scores. Given all scored regions in an image, greedy non-maximum suppression is applied (for each class independently). It rejects a region if it has an intersection-over-union (IoU) overlap with a higher-scoring selected region larger than a learned threshold.
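Greedy non-maximum suppression for a single class can be sketched in a few lines of plain Python. The function names, the `(x1, y1, x2, y2)` box format and the threshold value of 0.3 in the example are illustrative assumptions; the paper uses a learned threshold.

```python
def iou(a, b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def greedy_nms(boxes, scores, iou_threshold):
    """Return indices of the boxes kept for one class, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Reject box i if it overlaps too much with any already selected,
        # higher-scoring box.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30)]
scores = [0.9, 0.8, 0.7]
print(greedy_nms(boxes, scores, iou_threshold=0.3))  # [0, 2]
```

In the full system this would be run once per class, using that class’s column of the score matrix.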

Bounding-Box Regressors

These are basically one linear regression model per class that predicts a new detection window given the pool5 features (taken from the CNN just before the first fully connected layer) for a selective search region proposal. This step is not required for detection; using the SVMs alone would suffice. However, the region proposals are not always accurate, and the regressor helps correct their localisation errors. The paper reports that it boosts mAP by 3 to 4 points.

R-CNN Training

The training pipeline for R-CNN is pretty complex and messy!

Supervised pre-training

The CNN is pre-trained on the ILSVRC2012 classification dataset using image-level annotations only (bounding-box labels are not available for this data). In practice, this is equivalent to starting from an already trained model such as AlexNet, VGG-19, etc.

Domain-specific fine-tuning

Instead of the 1000 ImageNet classes, only 20 object classes (for PASCAL VOC) plus background are required. Hence, the final fully connected layer is removed and replaced with a randomly initialised (N + 1)-way classification layer (where N is the number of object classes, plus 1 for background).

To adapt the CNN to the new task (detection) and the new domain (warped proposal windows), SGD training of the CNN is continued using only warped region proposals. All region proposals with >= 0.5 IoU overlap with a ground-truth box are considered as positives for that box’s class and the rest as negatives (background). The ground-truth boxes of all classes are considered at this stage.

In each SGD iteration, 32 positive windows (over all classes) and 96 background windows are sampled uniformly to construct a mini-batch of size 128.
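The labelling rule and the 32/96 split can be sketched as follows. The function names, the use of class id 0 for background, matching each proposal to its best-overlapping ground-truth box, and sampling with replacement are all assumptions made for illustration.

```python
import random


def iou(a, b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def label_proposals(proposals, gt_boxes, gt_classes, pos_iou=0.5):
    """Give each proposal the class of its best-overlapping ground-truth box
    if that overlap is at least pos_iou, otherwise 0 (background)."""
    labels = []
    for proposal in proposals:
        best_overlap, best_class = 0.0, 0
        for gt_box, gt_class in zip(gt_boxes, gt_classes):
            overlap = iou(proposal, gt_box)
            if overlap > best_overlap:
                best_overlap, best_class = overlap, gt_class
        labels.append(best_class if best_overlap >= pos_iou else 0)
    return labels


def sample_minibatch(labels, n_pos=32, n_bg=96, seed=0):
    """Return 128 proposal indices: n_pos positives followed by n_bg background.

    Sampling is done with replacement (an assumption) so that small pools can
    still fill the batch. Both pools must be non-empty.
    """
    rng = random.Random(seed)
    positives = [i for i, label in enumerate(labels) if label != 0]
    background = [i for i, label in enumerate(labels) if label == 0]
    return rng.choices(positives, k=n_pos) + rng.choices(background, k=n_bg)


proposals = [(0, 0, 10, 10), (0, 0, 10, 6), (50, 50, 60, 60)]
labels = label_proposals(proposals, gt_boxes=[(0, 0, 10, 10)], gt_classes=[3])
batch = sample_minibatch(labels)
print(labels, len(batch))  # [3, 3, 0] 128
```

Biasing the batch towards positives (a quarter of each batch) matters because positive windows are rare compared to background.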

Object category classifiers (SVMs) Training

A binary SVM is trained for each class.

The labelling rule used to train the SVMs is different from the one used to fine-tune the CNN. Only the ground-truth boxes of a class are taken as positives for that class. Region proposals with less than 0.3 IoU overlap with every ground-truth box of the class are labelled as negatives, and proposals in the grey zone (IoU of 0.3 or more, but not ground truth) are ignored. Another thing to note is that, when fine-tuning the CNN, the ground-truth boxes of all classes are considered, whereas while training the SVM for a particular class only the ground-truth boxes of that class are considered.

The final fully connected layer (introduced while fine-tuning the CNN) is removed and the training windows (both positive and negative) are forward propagated to generate a 4096-dimensional feature vector for each.

Once features are extracted and training labels are applied, we optimise the linear SVM for that class.

Training the Bounding-Box Regressor

A simple bounding-box regression stage is used to improve localisation performance. After scoring each selective search proposal with a class-specific detection SVM, a new bounding box is predicted for the detection using a class-specific bounding-box regressor. The input to the regressor is the output of the pool5 layer of the CNN. Training is done by minimising a regularised L2 (least-squares) loss.
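The regressor does not predict box coordinates directly. The paper parameterises the targets as a scale-invariant shift of the box centre and a log-space change of width and height. A minimal sketch of computing those targets and applying predicted offsets is given below; the function names and the `(x1, y1, x2, y2)` box format are assumptions made for illustration, and the regression itself (from pool5 features to these targets) is not shown.

```python
import math


def to_center(box):
    """Convert (x1, y1, x2, y2) to (center_x, center_y, width, height)."""
    x1, y1, x2, y2 = box
    width, height = x2 - x1, y2 - y1
    return x1 + width / 2, y1 + height / 2, width, height


def regression_targets(proposal, gt_box):
    """Targets (t_x, t_y, t_w, t_h) that map a proposal onto a ground-truth box."""
    px, py, pw, ph = to_center(proposal)
    gx, gy, gw, gh = to_center(gt_box)
    return ((gx - px) / pw, (gy - py) / ph, math.log(gw / pw), math.log(gh / ph))


def apply_deltas(proposal, deltas):
    """Apply predicted (d_x, d_y, d_w, d_h) to a proposal and return a new box."""
    px, py, pw, ph = to_center(proposal)
    cx = pw * deltas[0] + px
    cy = ph * deltas[1] + py
    width = pw * math.exp(deltas[2])
    height = ph * math.exp(deltas[3])
    return (cx - width / 2, cy - height / 2, cx + width / 2, cy + height / 2)


proposal = (0, 0, 10, 10)
gt_box = (2, 2, 12, 12)
targets = regression_targets(proposal, gt_box)
refined = apply_deltas(proposal, targets)
```

Applying the exact targets to the proposal recovers the ground-truth box, which is a handy sanity check when implementing the two functions.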

TL;DR

Inputs: Image

Outputs: Bounding boxes + labels for each object in the image.

If you see any errors or issues in this post, please contact me and I’ll immediately correct them!

Link to the paper: https://arxiv.org/pdf/1311.2524.pdf

Mohit Jain
